Optical power is to fiber optics what voltage is to electricity: it is the primary quantity measured and is fundamental to almost all other measurements. Optical power meters are used to measure transmitter output and receiver input when testing fiber optic links. Paired with a test source, a meter measures the loss of a cable plant or any of its components. Even OTDRs measure optical power to calculate loss.

Somewhere in the back of the manual of every fiber optic instrument is a section on calibration, usually advising that the instrument be calibrated annually. Some contracts, especially those with government or military customers, call for proof that every instrument used in the project is calibrated.

So, what does calibration mean, and why does it matter? Let’s start with optical power and fiber optic power meters. And let’s start with a story.

Back in 1980, I started a company called FOTEC to make fiber optic instruments. We were one of the first companies in the world in that business. I had a long background in the test equipment business, and my partners were an experienced electronic engineer and an optical scientist at MIT.

When we started building fiber optic power meters, the only standards for optical power were those used to measure the output of other light sources such as incandescent and fluorescent lamps, LEDs, and lasers. We used equipment at MIT to set up a transfer standard we could use to calibrate our power meters.

Less than a year later, we ran into a problem. Two companies working on a military contract could not get a link to work consistently. The company developing the transmitter end used a Japanese power meter, and the company developing the receiver used our meters.

It turned out that the Japanese meter and our meter differed in calibration by about 3 decibels (dB), a factor of two in optical power.
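For readers who want to check the arithmetic, here is a minimal Python sketch of the conversion between decibels and a linear power ratio. The function names are my own, chosen for illustration; only the 3 dB figure comes from the story.

```python
import math


def db_to_ratio(db: float) -> float:
    """Convert a power difference in dB to a linear power ratio."""
    return 10 ** (db / 10)


def ratio_to_db(ratio: float) -> float:
    """Convert a linear power ratio to a difference in dB."""
    return 10 * math.log10(ratio)


# A 3 dB disagreement between two meters is a factor of about two in power.
print(round(db_to_ratio(3.0), 2))  # 2.0
print(round(ratio_to_db(2.0), 2))  # 3.01
```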

I had recently joined the EIA standards committee developing the first standards for fiber optics, and one of the other participants worked at the U.S. National Bureau of Standards (NBS; now called the National Institute of Standards and Technology, NIST) in Boulder, Colo. I brought the problem to his attention, and after some negotiation, NBS committed to developing a calibration standard for fiber optic power like its standards for volts, wavelength, length and weight.

It was not an easy task. It took almost five years to complete. In the end, an NBS-calibrated laboratory power meter and three test sources at the wavelengths most used in fiber optics (850, 1,300 and 1,550 nm) were ready to be sent to calibration labs, fiber optic instrument manufacturers and users for calibration of their equipment.

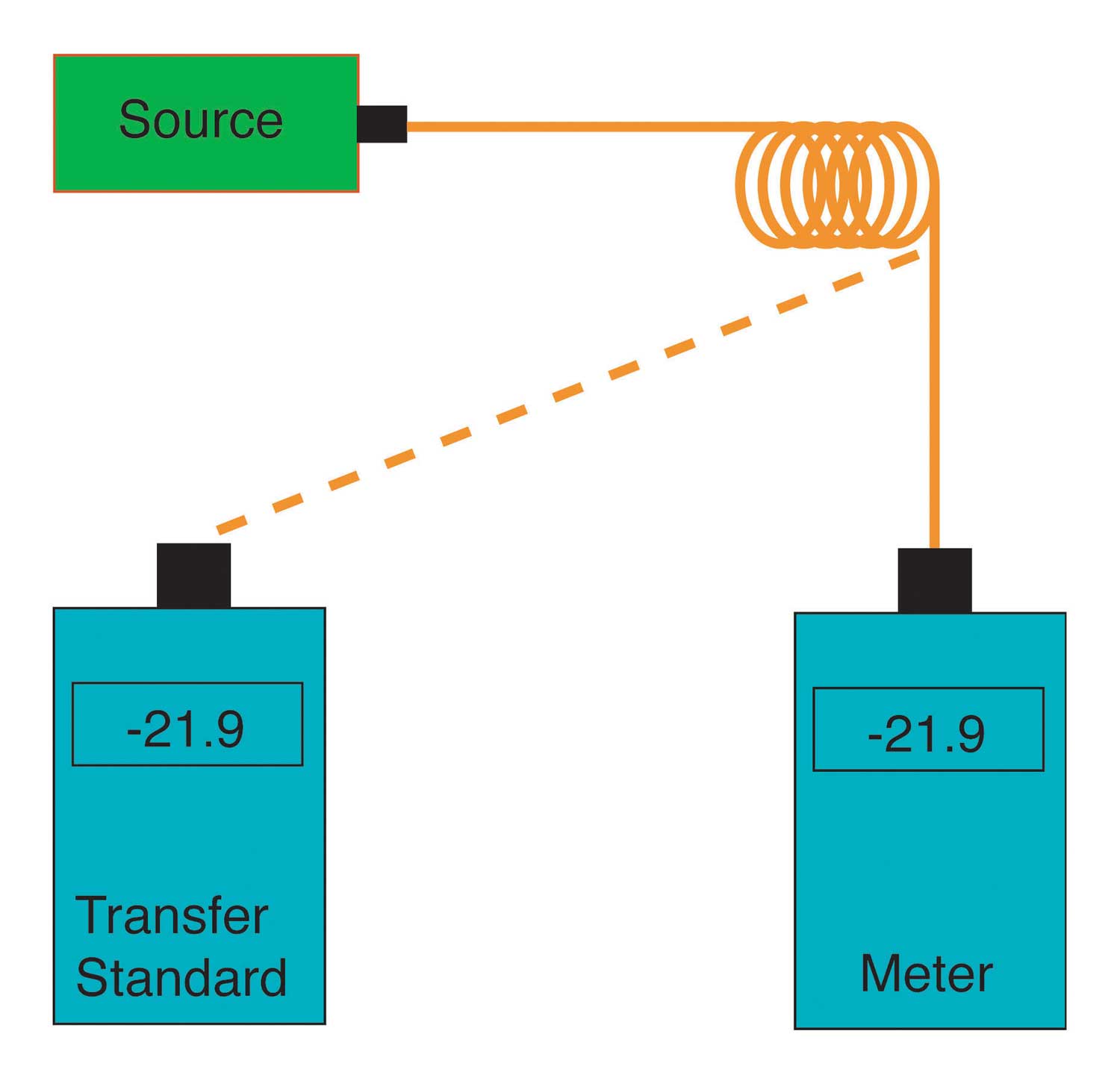

The calibration process today is the same as the one developed then. A lab requests that the NIST calibration standard be sent to it and uses that standard to calibrate its internal lab standards, called transfer standards. The lab then uses its transfer standards to calibrate the instruments it sells and the instruments sent in by customers for recalibration.

The actual calibration process is simple. Measure a source with a transfer standard, then adjust the meter being calibrated so it reads the same value on that source. The transferred calibration has a worst-case uncertainty of less than 5%, or about 0.2 dB.
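The transfer step can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the readings are made-up numbers, the function names are hypothetical, and a real meter stores its correction internally rather than in a script. The second function shows why 5% and 0.2 dB describe roughly the same uncertainty.

```python
import math


def calibration_offset_db(standard_dbm: float, meter_dbm: float) -> float:
    """Offset to add to the meter's reading so it matches the transfer standard."""
    return standard_dbm - meter_dbm


def percent_to_db(percent: float) -> float:
    """Express a power uncertainty given in percent as a value in dB."""
    return 10 * math.log10(1 + percent / 100)


# Hypothetical readings of the same source (illustrative values only).
standard_dbm = -10.00  # reading on the lab's transfer standard
meter_dbm = -10.35     # reading on the meter being calibrated

offset = calibration_offset_db(standard_dbm, meter_dbm)
print(f"{offset:+.2f} dB")           # +0.35 dB
print(f"{percent_to_db(5):.2f} dB")  # 0.21 dB
```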

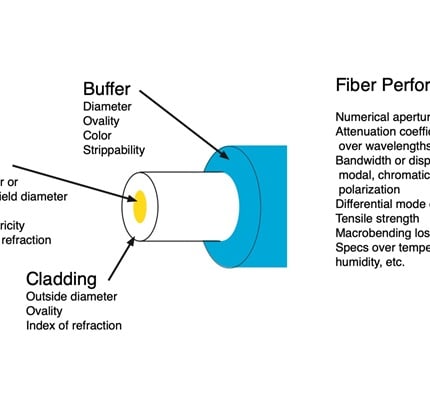

The process needs to be repeated for each calibration wavelength. The detectors in a fiber optic power meter are semiconductors whose sensitivity depends strongly on wavelength.

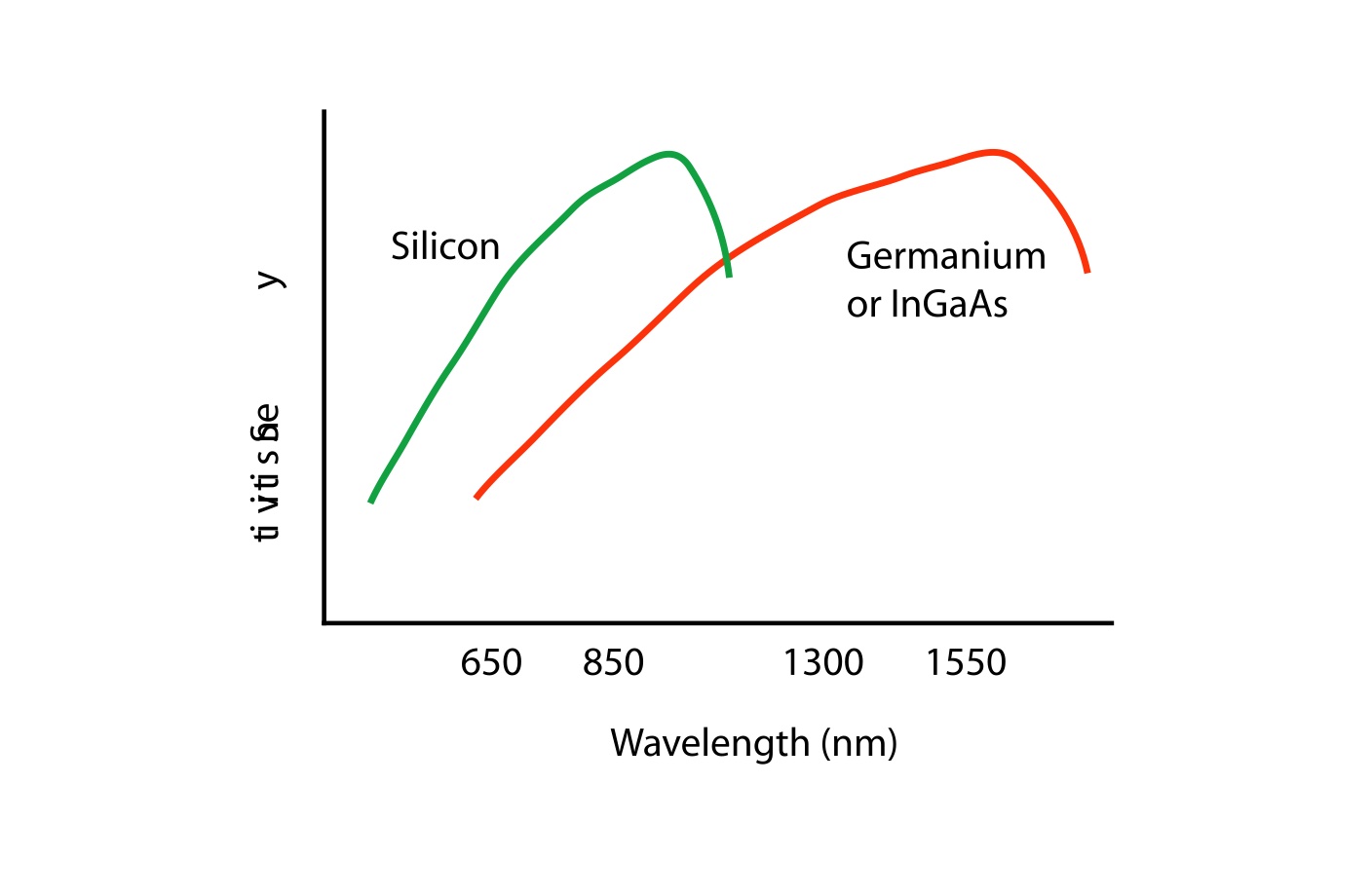

Since most fiber optic systems operate in the range of 850 to 1,550 nm, most meters use indium gallium arsenide (InGaAs) detectors because they have better noise specifications than germanium. Silicon detectors are used in devices measuring visible light since the human eye's sensitivity peaks around 555 nm.

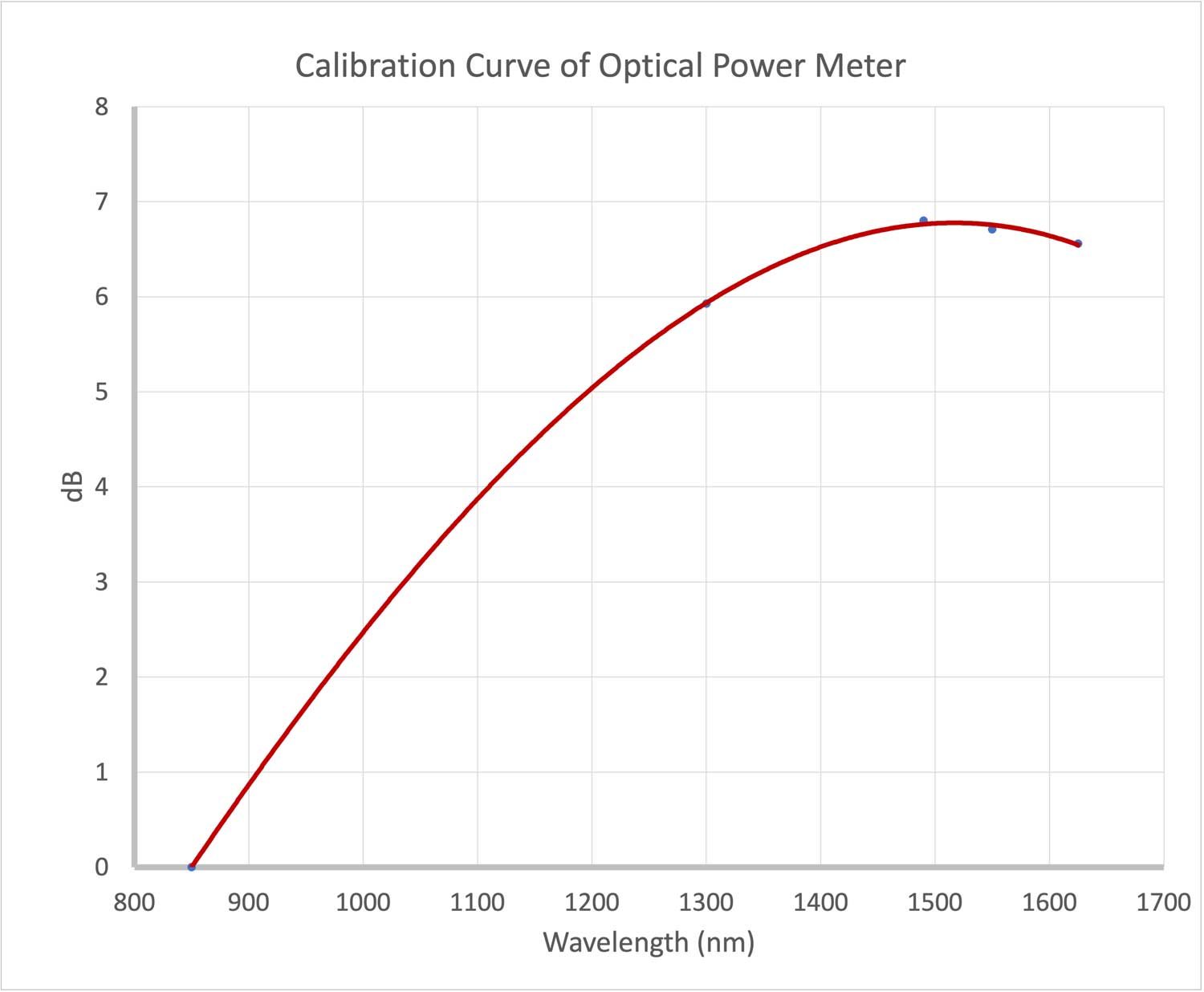

The difference in calibration of a fiber optic power meter over wavelength is large. You can test it for yourself. Connect a meter to a source of any wavelength and cycle through the calibration wavelengths on the meter. Plot the readings and you get a graph like this one, which we made by testing one of our power meters that has an InGaAs detector.

The difference between 850 and 1,550 nm is almost 7 dB, a factor of almost 5 in power. When you consider that the meter calibration is good to 0.2 dB, it becomes very important to set the meter to the wavelength of the source being measured to avoid large errors.
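To see how a wrong wavelength setting turns into a reading error, consider this minimal Python sketch. The table of relative detector sensitivities is an assumption made for illustration (only the roughly 7 dB spread between 850 and 1,550 nm comes from the measurement described above), and the function names are hypothetical.

```python
# Hypothetical relative sensitivity of an InGaAs detector, in dB relative to
# 1,550 nm. Illustrative values only; a real meter uses its own stored
# calibration factors.
REL_SENSITIVITY_DB = {850: -6.9, 1300: -0.5, 1550: 0.0}


def reading_error_db(source_nm: int, selected_nm: int) -> float:
    """Error in the displayed power when the meter is set to selected_nm
    but the light being measured is actually at source_nm."""
    return REL_SENSITIVITY_DB[source_nm] - REL_SENSITIVITY_DB[selected_nm]


def corrected_dbm(displayed_dbm: float, source_nm: int, selected_nm: int) -> float:
    """Recover the true power from a reading taken at the wrong setting."""
    return displayed_dbm - reading_error_db(source_nm, selected_nm)


# An 850 nm source measured with the meter left on its 1,550 nm setting.
err = reading_error_db(850, 1550)
print(err)                               # -6.9
print(round(10 ** (abs(err) / 10), 1))   # 4.9
```

In this sketch the meter reads almost 7 dB low, about 35 times the 0.2 dB calibration uncertainty.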

What’s a proper interval for recalibration? The instruction manual and some contracts call for instruments to have been recalibrated within the last year. That’s not unreasonable. But we have experience with some of our own instruments that are 20 years old and still in calibration, so if you forget, it probably isn’t going to cause big problems.

However, let this story be a reminder. It’s time to get those instruments calibrated.

About The Author

HAYES is a VDV writer and educator and the president of the Fiber Optic Association. Find him at www.JimHayes.com.